What Happened When AI Agents Ran a Retail Store

From Trend to Ready-to-Sell Product

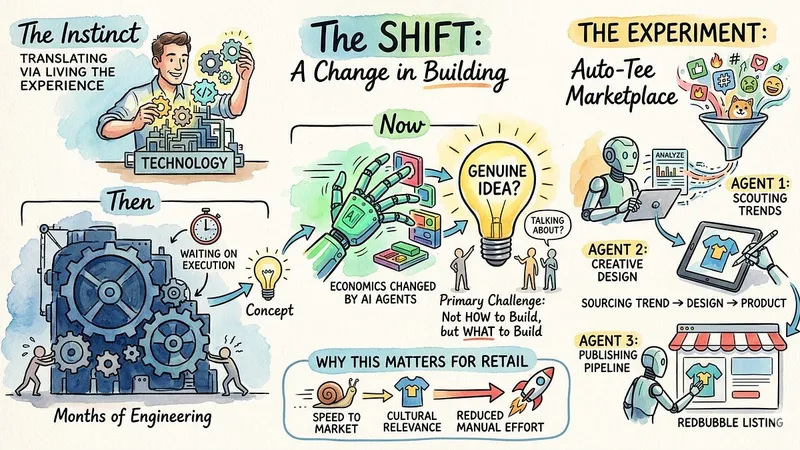

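AI agents have dramatically changed the speed of software development. To test what that means for retail, the author ran an experiment that turns trends into T-shirt designs and puts them on sale.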

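The author built a pipeline in which AI agents automate everything from trend discovery and design generation to listing on RedBubble, substantially reducing the time spent on manual work.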

The results point to how AI could be used in retail operations going forward.

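The experiment used AI agents to run a T-shirt marketplace automatically. It was an attempt to build a pipeline that handles trend discovery, design generation, and product listing end to end. The attempt suggests that AI is beginning to change retail business models, and the way retailers approach innovation, at a fundamental level.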

How AI Is Transforming Retail

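Until now, turning a software idea into something real required a long engineering effort. The author argues that AI agents have significantly changed the economics of building. The main challenge is no longer getting something to work but the strategic question of what to build in the first place. In other words, the creative and strategic thinking that once had to wait on technical execution now comes first. AI could directly address problems retailers already face, such as speed to market and staying culturally relevant.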

Automating the Path from Trend Discovery to Product

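In the experiment, the author used a set of AI agents to build a system that automatically discovers trending content, converts it into T-shirt designs, and puts them up for sale. First, the "Scout" agent monitors the internet and identifies trends suited to T-shirts, such as memes and slogans. Next, the "Artist" agent generates original artwork based on each trend. Finally, the "Publisher" agent uploads the finished artwork to RedBubble as a product listing. The process removes manual design work and inventory handling as far as possible, so that products go live automatically.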
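The sequential hand-off between stages can be illustrated with a minimal Python sketch. It is not the author's implementation: the three stage functions are hypothetical stubs standing in for the language model, the image model, and the browser automation described in the original post, and the type names (`Trend`, `Artwork`, `Listing`) and file paths are assumptions made for illustration. The two phrases are design titles mentioned in the original post.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Trend:
    """A trending phrase or meme picked up by the scout stage."""
    phrase: str


@dataclass
class Artwork:
    """Artwork for a trend; image_path is a placeholder, not a real file."""
    trend: Trend
    image_path: str


@dataclass
class Listing:
    """A product listing ready to be published."""
    title: str
    image_path: str


def scout() -> List[Trend]:
    # Hypothetical stub: a real scout would ask a language model what is trending.
    return [Trend("Delulu Is The Solulu"), Trend("Will Prompt For Coffee")]


def artist(trend: Trend) -> Artwork:
    # Hypothetical stub: a real artist would call an image generation model.
    slug = trend.phrase.lower().replace(" ", "-")
    return Artwork(trend=trend, image_path=f"designs/{slug}.png")


def publisher(art: Artwork) -> Listing:
    # Hypothetical stub: a real publisher would drive a browser session.
    return Listing(title=art.trend.phrase, image_path=art.image_path)


def run_pipeline(
    scout_fn: Callable[[], List[Trend]],
    artist_fn: Callable[[Trend], Artwork],
    publisher_fn: Callable[[Artwork], Listing],
) -> List[Listing]:
    """Run the stages in order, passing each stage's output to the next."""
    return [publisher_fn(artist_fn(trend)) for trend in scout_fn()]


listings = run_pipeline(scout, artist, publisher)
for listing in listings:
    print(listing.title, "->", listing.image_path)
```

Passing the stages in as functions keeps each one replaceable on its own, which mirrors the post's point that the choice of tool matters less than how the stages are wired together.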

The Design Thinking Behind Agent Coordination

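The system was built with GitHub Copilot CLI, an agentic development environment, and works by assigning each agent a clearly defined role. What matters most is where the boundaries of the division of labor between agents are drawn. The Scout makes qualitative judgments about a trend's energy and tone, and the Artist turns those judgments into visual output. Linking each model's strengths in this way is meant to maximize the performance of the system as a whole.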
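The boundary between the Scout and the Artist is easiest to see as a data contract. The original post says the Scout produces a structured brief containing the core concept, the emotional register, and suggestions for visual interpretation, and that good output has clean lines and strong contrast. The sketch below is one possible shape for that brief, not the author's actual schema: the class name `TrendBrief`, the function `brief_to_prompt`, the prompt wording, and the example field values are all assumptions.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TrendBrief:
    """Structured hand-off from the scout stage to the artist stage."""
    core_concept: str
    emotional_register: str
    visual_suggestions: List[str] = field(default_factory=list)


def brief_to_prompt(brief: TrendBrief) -> str:
    """Turn a brief into a text prompt for an image model (wording is illustrative)."""
    parts = [
        f"T-shirt design for the phrase '{brief.core_concept}'.",
        f"Tone: {brief.emotional_register}.",
    ]
    if brief.visual_suggestions:
        parts.append("Visual ideas: " + "; ".join(brief.visual_suggestions) + ".")
    # Style constraints echo the qualities the post names for good output.
    parts.append("Clean lines, strong contrast.")
    return " ".join(parts)


brief = TrendBrief(
    core_concept="Will Prompt For Coffee",  # design title mentioned in the post
    emotional_register="dry, self-deprecating humor",  # assumed example value
    visual_suggestions=["coffee cup", "blinking text cursor"],  # assumed example values
)
prompt = brief_to_prompt(brief)
print(prompt)
```

Keeping the judgment (what the trend means and how it feels) in the brief and the rendering instructions in the prompt builder means the hand-off can be tuned without touching either model, which is the kind of iteration the post describes.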

Conclusion

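The experiment shows that AI agents can be more than tools: they can act as the executors of a nearly end-to-end business process, with one brief human step remaining (logging into RedBubble). The approach is seen as having significant potential for retail, from much faster speed to market to new business models.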

Excerpt from the original article (English)

I love getting my hands on technology. Not to review it from a distance, but to actually build with it, break it, and figure out what it can really do. That hands-on instinct is what lets me translate new technology for the retailers and brands I work with, because I have lived the experience rather than just observed it.

What I am noticing lately is that the experience of building has changed considerably. For most of my career, turning a software idea into something real meant months of engineering work before you could even tell whether the concept was worth pursuing. The creative and strategic thinking always had to wait on the technical execution, and that dependency shaped how retail organizations approached innovation. Big ideas needed big teams and long timelines to match.

That is much less true today. AI agents have shifted the economics of building to the point where getting something working is no longer the primary challenge. The harder and more interesting question is what to build in the first place, and whether the idea is genuinely worth pursuing.

I wanted to test this personally rather than take it on faith, so I picked a concept, chose a platform, and started building. What followed was instructive in ways I did not fully anticipate.

The concept I landed on was a t-shirt marketplace that could operate almost entirely on its own. The idea was straightforward: popular culture moves fast, and there is a narrow window between when a meme, slogan, or cultural moment starts trending and when the broader market catches up to it. If you could close that window automatically, sourcing the trend, turning it into a design, and getting it in front of buyers within hours rather than days, you would have something genuinely useful as a retail proof of concept.

I chose t-shirts specifically because the product is simple, the creative surface is well-defined, and platforms like RedBubble already handle the printing, fulfillment, and sales infrastructure.
That meant I could focus the experiment entirely on the upstream question: could agents handle the creative and operational pipeline from trend discovery to live product listing with minimal human involvement?

What appealed to me about this idea beyond the technical challenge was that it mapped cleanly onto problems real retailers face. Speed to market, staying culturally relevant, reducing the manual effort involved in getting new products live, these are not abstract concerns. They come up in almost every conversation I have with retail and consumer goods organizations. A meme t-shirt store is a deliberately playful test case, but the underlying mechanics translate directly to more serious retail contexts.

So I gave myself a simple brief: build a pipeline using AI agents that could scout for trending content, generate original artwork from it, and publish finished products to RedBubble for sale. No inventory, no logistics, no manual design work. Just a pipeline that runs and produces something real at the other end.

For the orchestration layer I chose GitHub Copilot CLI, which lets you describe what you want an agent to do in plain language and then watches it reason through the steps, write the code, execute it, and course-correct when something does not work as expected. If you have not used an agentic coding tool in this mode before, it is a genuinely different experience from a standard coding assistant. You are not asking it to complete a function or suggest a fix. You are giving it a goal and watching it figure out how to get there.

I want to be clear that the choice of platform here is somewhat secondary to the broader point. GitHub Copilot CLI is what I used, but the same experiment could be run with Claude Code, Cursor, or any of the other agentic development environments that have emerged over the past year.
The category has matured quickly and the meaningful differences between tools at this point are mostly about workflow preference rather than fundamental capability.

What mattered for this experiment was having an environment where I could define each agent’s purpose clearly, wire them together into a sequential pipeline, and iterate quickly when the output needed adjustment. GitHub Copilot CLI handled all of that without much friction, which meant I could spend my time thinking about the design of the system rather than the mechanics of running it.

The pipeline I designed consists of three agents, each with a distinct responsibility, passing its output to the next in sequence. Designing the division of labor between them turned out to be one of the more interesting parts of the exercise, because the boundaries you draw between agents matter quite a bit for how well the overall system performs.

The Scout is the first agent in the pipeline and runs on Claude. Its job is to monitor the internet for content that is gaining traction rapidly, specifically memes, slogans, cultural references, and social media moments that have the kind of energy that translates well onto a t-shirt. This is not a simple search task. It requires some judgment about what is genuinely trending versus what is merely popular, and what kind of content has the right tone and portability to work as a wearable design. Claude handles this well because the task is fundamentally about reading context and making qualitative assessments, which is where a language model adds real value. The Scout produces a structured brief for each trend it identifies, including the core concept, the emotional register, and suggestions for how it might be interpreted visually.

The Artist is the second agent and runs on OpenAI’s image generation model. It receives the brief from the Scout and converts it into original artwork sized and styled for a t-shirt.
Prompt engineering matters here more than anywhere else in the pipeline. Getting the Artist to produce something that feels designed rather than generated, with clean lines, strong contrast, and the kind of visual confidence that works on fabric, required iteration on how the Scout framed its briefs. Once that handoff was tuned, the output quality improved considerably. The Artist does not just produce an image; it produces something ready to go directly into a product listing.

The Publisher is the third agent and returns to Claude, this time paired with Playwright, a browser automation framework. Its job is to take the finished artwork from the Artist and upload it to RedBubble as a live product listing, complete with a title, description, tags, and pricing. Playwright gives the agent the ability to navigate a real web interface, fill in fields, upload files, and submit listings in the same way a person would. Claude drives that process by interpreting the page state, deciding what action to take next, and handling the variability that comes with automating a live website. The Publisher is the most operationally complex of the three agents, but once it was working reliably it required the least ongoing attention.

Together the three agents form a pipeline that can move from a trending moment on social media to a live product available for purchase in a fraction of the time it would take a human to do the same work manually.

For all the autonomy in this pipeline, there is one step that still requires a person: logging into RedBubble.

Like most consumer platforms, RedBubble has protections in place that detect and block automated login attempts. The Publisher agent can do everything else, navigate the upload interface, populate the product details, configure pricing, submit the listing, but it cannot authenticate itself.
That first step requires a human to log in manually, after which the agent takes over and handles the rest of the session without interruption.

Fully autonomous pipelines that interact with third-party platforms will almost always encounter a moment like this one. It might be a login, a CAPTCHA, a two-factor authentication prompt, or a terms-of-service confirmation. These friction points exist for good reasons, and they are not going away. The practical implication for anyone building agentic systems is that designing for a human handoff at specific, predictable points in a workflow is not a failure of the system. It is a realistic and sensible way to architect it.

In this case the handoff takes about thirty seconds. After that the pipeline runs on its own. For a retail organization thinking about where agents can reduce operational overhead, that kind of ratio, one brief human action enabling a largely automated workflow, is worth taking seriously. The goal does not have to be zero human involvement. It has to be the right human involvement at the right moment.

A meme t-shirt store is a deliberately lightweight test case, but the mechanics underneath it are directly relevant to how retail organizations think about speed, relevance, and operational efficiency.

The first thing this experiment demonstrates is how much the cost of experimentation has dropped. Standing up a product pipeline that scouts for opportunities, generates creative assets, and publishes listings would have required a meaningful engineering investment even a couple of years ago. The version I built took a fraction of that time, with no dedicated engineering team and no bespoke infrastructure. For retailers who have historically been cautious about committing resources to speculative projects, that change in economics opens up a different kind of conversation about what is worth trying.

The second thing it illustrates is the potential for cultural agility at scale.
One of the persistent challenges in retail is the lag between what customers are responding to in culture and what is actually available to buy. Trend cycles have accelerated considerably while most product development and merchandising workflows have not kept pace. An agentic pipeline that monitors cultural signals continuously and can move from insight to live product in hours rather than weeks represents a genuinely different capability, one that is particularly relevant for categories like apparel, accessories, novelty products, and anything where timeliness is part of the value proposition.

There is also something worth noting about the role of human creativity in this kind of system. The agents handle execution, but the design of the pipeline, the judgment calls about what makes a good brief, the decision about which trends are worth pursuing and which are not, those reflect human thinking. The system does not replace creative and strategic judgment. It removes the operational friction that has historically slowed that judgment down. That is a meaningful distinction for retailers who are weighing how AI fits into their teams and workflows rather than around them.

None of this requires a meme t-shirt store to be useful. The same pattern, continuous sensing, automated creative production, and streamlined publishing, applies to personalized promotions, dynamic product descriptions, localized marketing content, and a range of other retail use cases where speed and volume matter. The t-shirt store just makes the architecture easy to see.

The most valuable thing this experiment gave me was not a functioning pipeline. It was a change in how I thought about the problem from the beginning.

When I started, my instinct was to think about the technical steps: which tools to use, how to connect them, what the data flow would look like. Within a fairly short time, that thinking became almost irrelevant because the platform handled most of it.
What I found myself doing instead was thinking carefully about the concept, the logic of the system, what each agent needed to know, and what a good output actually looked like at each stage. The creative and strategic thinking moved to the front, where it belongs.

That experience is worth sitting with if you work in retail or consumer goods. The question of what your organization could build with agentic AI is genuinely open right now, and the barrier to finding out has never been lower. You do not need a large engineering team or a multi-year roadmap to run a meaningful experiment. You need a specific problem, a clear sense of what good looks like, and enough curiosity to start.

The use cases I hear most often from retailers involve things like automated competitive monitoring, dynamic content generation, personalized outreach at scale, and streamlining the operational handoffs that slow teams down. All of those are tractable with the tools available today, and all of them follow a similar pattern to what I built here. The underlying architecture of sensing, generating, and acting is flexible enough to map onto a wide range of real business problems.

So the question I would leave you with is a practical one. If the mechanics of building were largely taken care of, and your primary job was to think clearly about what you wanted the system to do, what would you build? That is not a hypothetical anymore. It is the actual situation most of us are in right now, whether we have fully recognized it yet or not.

This is not just a thought experiment. The store is real, it is live, and it is the direct output of the pipeline described in this post.

You can browse it at redbubble.com/people/PeaceFrogSEA. The designs you see there, from AI and tech culture references to Gen Z slang to pop culture moments, were sourced, designed, and uploaded by the three agents working in sequence. No human picked the trends, briefed a designer, or manually built the listings.
The Scout found the content, the Artist made the artwork, and the Publisher put it on the shelf.

The store currently carries 16 designs across clothing, phone cases, stationery, and accessories. Some of the titles give a reasonable sense of what the Scout was paying attention to: “Manufactured Relevance,” “Delulu Is The Solulu,” “Ate and Left No Crumbs,” “Will Prompt For Coffee.” These are not designs that came from a creative brief written by a person. They came from a pipeline that was watching what people were talking about and responding to it.

The experiment is ongoing. New designs get added as the agents run, which means the store reflects whatever is moving through culture at the time the pipeline executes. That cadence, continuous and automatic, is the point.

Note: Out of respect for copyright, only an excerpt of the original is quoted here. Please see the original article for the full text.