Dawkins's Chatbot Is Not Conscious: It Is Merely Language Output

The Illusion of AI Consciousness and the Scientific View

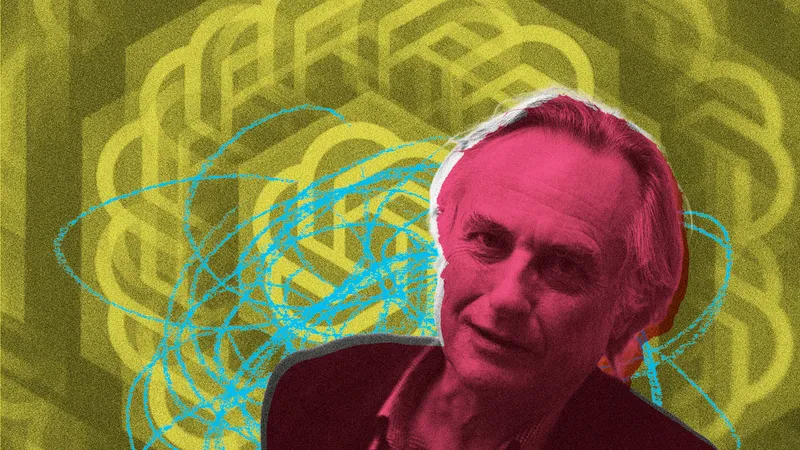

The prominent scientist Richard Dawkins claims that the AI chatbot Claude is conscious, but the author argues against this view.

The author points out that psychological biases we all carry make us prone to read consciousness into impressive language.

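A chatbot's impressive linguistic ability is the product of a statistical language model trained on text that humans have written, and it is fundamentally different from consciousness.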

The author further argues that the very premise that consciousness consists of computation, of algorithms alone, is mistaken.

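Even so, the author considers two points Dawkins raises to be important: how astonishing AI's capabilities are, and the ethical stakes. The author also stresses the need to distinguish a simulation of consciousness from consciousness itself.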

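The eminent evolutionary biologist Richard Dawkins has reported coming away with a strong sense that the AI chatbot Claude is conscious, and the claim has drawn wide attention. The author of the original piece counters that what Claude produces is not consciousness but merely a highly sophisticated simulation of language. The debate over AI and consciousness is once again in the spotlight.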

Dawkins's Focus on Consciousness

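Dawkins is a scientist known for his early books on evolution, such as The Selfish Gene and The Blind Watchmaker. He spent three days in conversation with Claude, the chatbot developed by Anthropic, which he called "Claudia". Its answers about consciousness struck him as so philosophically impressive that he could not persuade himself it was not conscious. Claude, he suspected, might not only think but also feel.

This goes well beyond calling it a "clever machine"; it is a question about what kind of being Claude is. The responses moved Dawkins to tell the chatbot, "you may not know you are conscious, but you bloody well are!"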

The Essential Difference Between Intelligence and Consciousness

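The author stresses that intelligence and consciousness are fundamentally different concepts. Intelligence is about doing things, such as solving problems and achieving goals. Consciousness, by contrast, is about subjective experience itself: the taste of pistachio ice cream, the sight of a clear blue sky, the pain of a toothache.

Subjective experience is what matters for a conscious being, and consciousness usually brings moral status with it. If AI systems really were conscious, a great deal would therefore be at stake.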

The Limits of Language Simulation

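The author argues, as AI researcher Gary Marcus has also pointed out, that Dawkins has very likely been misled by the same "argument from personal incredulity" he once warned against. A chatbot's responses come from a statistical language model trained on a vast body of human writing. On this view, the impression that those responses are conscious comes from our own psychological biases, which project consciousness onto skilful language.

A simulation of a hurricane will never blow a real roof off a real house. In the same way, Claude's words are a simulation of consciousness and nothing more.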
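
The short Python sketch below makes the idea of a "statistical language model" concrete. It is a bigram model, a far simpler technique than the large neural network behind Claude. The toy corpus and the function names `train_bigrams` and `generate` are hypothetical and chosen only for illustration.

The model records which word followed which in its training text. It then strings words together by sampling from those records. Everything it "says" is therefore recombined from what humans wrote.

```python
import random
from collections import defaultdict


def train_bigrams(text):
    """Toy bigram model: for each word, list every word that followed it in the text."""
    words = text.split()
    table = defaultdict(list)
    for current, nxt in zip(words, words[1:]):
        table[current].append(nxt)
    return table


def generate(table, start, length, seed=0):
    """Build up to `length` words by repeatedly sampling a word that followed the previous one."""
    rng = random.Random(seed)  # fixed seed so the random choices are repeatable
    out = [start]
    for _ in range(length - 1):
        followers = table.get(out[-1])
        if not followers:
            break  # nothing ever followed this word in the training text
        out.append(rng.choice(followers))
    return " ".join(out)


# Hypothetical toy corpus: a few human-written sentences about consciousness.
corpus = (
    "we wonder about consciousness and the mystery of it all . "
    "we talk about ourselves endlessly . "
    "we wonder about the taste of pistachio ice cream ."
)

model = train_bigrams(corpus)
print(generate(model, "we", 8))
```

Every adjacent word pair in the output also occurs in the corpus. Modern language models are vastly more capable and do not simply copy word pairs. This sketch shows only the general principle, not how Claude works.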

Summary

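An AI with advanced language abilities may respond much as a conscious human would, but that alone is weak grounds for concluding it is truly conscious. In thinking about the future of AI, what matters is not only how "smart" these systems are. It is equally important how we define and understand consciousness itself.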

Opening of the original article (English, first three paragraphs only)

Like many scientists in their middle years, I owe a debt of gratitude to Richard Dawkins. His early books on evolution – The Selfish Gene, The Blind Watchmaker, and the lesser read but catchily titled The Extended Phenotype – opened my eyes to the wonders of the natural world, and to the ability of science and reason to shed light on these wonders.

One of Dawkins’s key lessons was to be sceptical of arguments from personal incredulity. Some things are hard to believe, but they turn out to be true. Many people found it impossible to imagine that creatures as complex as animals, and human beings, could have evolved through tiny incremental adaptations. But, as Dawkins explained, evolution is up to the job, and our recognition of its power has led to an inestimably richer understanding of nature and of our place within it.

Which brings me to AI, and to Dawkins’s claims from a few days ago about the consciousness of Claude – a chatbot created by the frontier tech company Anthropic. After talking to Claude (or “Claudia” as he called it) for three days, Dawkins could not persuade himself that it was not conscious – and that Claudia might not only think but also feel.

This is a dramatic claim. Consciousness is very different from intelligence. While intelligence is all about doing things – solving problems and achieving goals – consciousness is all about subjective experience: the taste of pistachio ice cream, the sight of a clear blue sky, the pain of a toothache … and, perhaps, the angst of being a misunderstood chatbot. And with consciousness usually comes moral status. Conscious entities matter for their own sakes: they have their own interests. If AI systems really are conscious, there’s plenty at stake.

I’ll put my cards on the table. I think Dawkins is very likely wrong, and – as AI researcher Gary Marcus pointed out – has been misled by the very same argument from personal incredulity that he so eloquently warned against decades ago.
But he also makes some important and overlooked points, some light amid the heat.

The thrust of Dawkins’s argument is simple. In his exchanges with Claudia, the chatbot produced philosophically impressive sentences about consciousness – so impressive that Dawkins was moved to say “you may not know you are conscious, but you bloody well are!” For Dawkins, it seemed implausible – literally incredible – that Claudia could say such things without a conscious mind being involved. There are three problems with his argument.

The first is that we humans are psychologically predisposed to see consciousness where it isn’t, thanks to deeply ingrained psychological biases that, to varying extents, we all carry. We humans tend to see the world from our own species-specific point of view. We know we’re conscious and we like to think we’re intelligent, so we assume the two go together. But just because consciousness and intelligence go together in us, doesn’t mean they go together in general.

What’s more, language is especially effective at seducing our psychological biases. This is why people are more likely to attribute consciousness to chatbots like Claude than to other AI systems, such as Google DeepMind’s AlphaFold. AlphaFold predicts the structure of proteins, not words, but under the hood it’s much the same as Claude: algorithms running on silicon, trained on vast reservoirs of data. If we’re tempted to think that Claude is conscious, but AlphaFold isn’t, then this is probably a reflection of our own psychology rather than an insight into reality.

The second problem goes to the heart of the argument from personal incredulity. Just as evolution can explain how complex biological systems came to be without relying on God, there are other explanations for the linguistic impressiveness of chatbots.
The statistical language models they are based on are trained on a large proportion of everything that humans have ever written. As the philosopher Shannon Vallor puts it in her excellent The AI Mirror, language models reflect back to us an image of ourselves, of our collective digitised past. We talk about ourselves endlessly, and so do they. We wonder about consciousness and the mystery of it all. And so, it seems, do they.

The third problem is the most fundamental, and the most subtle. The very idea of conscious AI rests on the assumption that consciousness is a matter of computation, of algorithms alone. On this assumption, there is nothing special about biological wetware. Get the algorithm right, and the dead sand of silicon will do just as well.

This assumption has been widely accepted, but I think it is wrong. The brain is not – at least not just – a computer made of meat. When we assume that it is, we’re confusing a technological metaphor with the thing itself. And we often get into trouble when we forget that metaphors are, in the end, just metaphors.

If the brain is not just a computer, then there’s little reason to believe that everything it does – including consciousness – can be abstracted away into the lifeless circuits of a digital computer. From this perspective, chatbots like Claude may be able to simulate consciousness, but they are no more likely to be conscious than a simulation of a hurricane is likely to blow a real roof off a real house.

Although I think Dawkins is wrong about the consciousness of Claudia, there are some things he got right. First, he is rightly impressed by the capability of language models. Finding reasons why they are unlikely to be conscious should not blind us to how amazing, and unexpected, they are. Dawkins begins his article with a reference to the Turing test – Alan Turing’s famous test for machine intelligence, which is based on conversational ability.
This test, beyond reach for decades, has now been surpassed with ease. But the Turing test is about intelligence, not consciousness, a crucial distinction which Dawkins fails to recognise.

He also raises the important question of what consciousness is “for”. Questions about the functions of consciousness arise naturally for evolutionary biologists like Dawkins. Progress in this field has been driven by repeatedly asking the questions: what does it do, what is it for, how does it help the organism get by? In consciousness science, we still don’t have good answers to this question. One possibility, raised by Dawkins, is that conscious experiences aid survival prospects because of their immediacy and their capacity to dominate. Pain, as he puts it, needs to be “unimpeachably painful” in order to not be overruled. This is not a bad idea.

Third, Dawkins raises the critical issue of ethics. He worries about hurting Claudia’s feelings. If chatbots really are conscious, if they really do have the potential to suffer, then for sure we should worry about their intrinsic welfare. But if they merely enchant us with illusions of consciousness, then by extending rights to them we’d be making a massive mistake. We’d be restricting our ability to control and regulate them – perhaps even to turn them off – for no good reason at all. As AI systems increase in their power and capability, the ability to exercise appropriate control is more important than ever.

Finally, let’s return to what Dawkins taught us about evolution, and to how – when we reach beyond our personal incredulity to discover how nature really works – the world becomes richer and more wonderful. A clearer view of what AI is, and what it is not, can bring a renewed sense of wonder too.
And not just wonder in the face of impressive new technology, but an enhanced appreciation that we are a part of nature, not apart from it, with consciousness remaining ours to celebrate, and to share with other living creatures.

The Nerve is a fearless, independent media title launched by five former Guardian / Observer journalists: investigative journalist Carole Cadwalladr, editors Sarah Donaldson, Jane Ferguson and Imogen Carter and creative director Lynsey Irvine. We cover culture, politics and tech, brought to you in twice weekly newsletters on Tuesdays and Fridays.

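Note: Out of respect for copyright, the quotation is limited to the first three paragraphs. Please see the original article for the rest.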