Apache Cassandra compaction optimization: Direct I/O cuts p99 read latency by 5x

A new patch for Apache Cassandra 6 introduces Direct I/O on the compaction path, significantly reducing read latency while compaction runs.

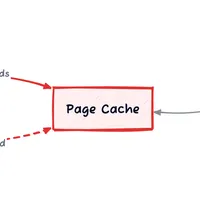

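The technique bypasses the page cache, so compaction no longer pollutes it, which eases the performance bottleneck that cache pollution causes.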

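With Direct I/O on the compaction read path, p99 read latency dropped by 5x, average read latency improved by 1.8x, and system stalls caused by memory pressure were significantly reduced.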

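The advantage of this optimization is that it avoids the extra overhead the kernel page cache would otherwise incur, improving Cassandra's overall performance.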
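As a rough illustration of what "bypassing the page cache" means at the system-call level, the sketch below opens a file with `O_DIRECT` and reads into a page-aligned buffer. It is not Cassandra's implementation (Cassandra is written in Java); the names `aligned_span` and `read_direct` and the 4096-byte block size are assumptions made for this example, and the code is Linux-only.

```python
import mmap
import os

BLOCK = 4096  # assumed block size; real code would query the filesystem


def aligned_span(offset: int, length: int, block: int = BLOCK) -> tuple[int, int]:
    """Widen [offset, offset + length) to block boundaries; returns (start, size)."""
    start = (offset // block) * block
    end = -(-(offset + length) // block) * block  # round up to the next block
    return start, end - start


def read_direct(path: str, offset: int, length: int, block: int = BLOCK) -> bytes:
    """Read `length` bytes at `offset` with O_DIRECT (hypothetical helper)."""
    if length <= 0:
        return b""
    start, size = aligned_span(offset, length, block)
    buf = mmap.mmap(-1, size)  # anonymous mmap memory is page-aligned
    fd = os.open(path, os.O_RDONLY | os.O_DIRECT)
    try:
        # Offset, size, and buffer address are all block-aligned, as O_DIRECT requires.
        n = os.preadv(fd, [buf], start)
        skip = offset - start
        return bytes(buf[skip:min(n, skip + length)])
    finally:
        os.close(fd)
        buf.close()
```

The caller asks for an arbitrary byte range; the helper widens it to block boundaries, reads the aligned span, and trims the result. Some filesystems (tmpfs, for example) reject `O_DIRECT`, in which case `os.open` raises `OSError`.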

Opening of the original article (English, first 3 paragraphs only)

A patch I contributed to Apache Cassandra 6 cuts p99 read latency by 5x during compaction.

Compaction pollutes the page cache with data the application knows is throwaway, but the kernel does not. Compaction is unavoidable, the price Cassandra pays for fast writes. Data isn't sorted on the way in; it's sorted later, in the background, by merging files on disk.

Reducing compaction throughput or increasing node memory can dampen the effect on tail query latencies. The first costs throughput, the second costs money. Both are compromises. Direct I/O allows Cassandra to live in better harmony with its own housekeeper, bypassing the page cache entirely for compaction reads.

Linux Page Cache

Any time a file-based read or write occurs (typically via read() and write() system calls), data passes through the page cache, a kernel-managed in-memory cache between the application and storage device.

The kernel manages this through two LRU (least-recently-used) lists: an active list and an inactive list. Hot pages live on the active list; cold or read-once pages remain on the inactive list as first candidates for eviction.

Buffered I/O: compaction and queries share the page cache

Buffered I/O works well for most applications, benefiting reads through caching and readahead, and writes through deferred, coalesced flushes, freeing the developer from reasoning about I/O sizing and access patterns.

For most workloads, the kernel makes good decisions. Not all workloads are most workloads.

The page cache is a sacred space, best populated with data likely to be re-accessed soon, or writes that benefit from coalescing before hitting disk.

Compaction and the Page Cache

Compaction, which merges multiple SSTables into a single SSTable, is a prime example of a page cache pollutant. Input SSTables are read sequentially and discarded; the output SSTable is written in a single sequential pass.
Both reads and writes flood the page cache with data unlikely to be accessed again, displacing legitimate hot-page candidates.

Displacement alone would be costly. The cost of eviction makes it worse.

Clean, read-once pages from the input SSTables can be dropped immediately. Dirty pages of the newly written SSTable must first be flushed to disk before eviction is possible. Buffered writes of single-use pages are more expensive than buffered reads, and the reclaimer pays that expense.

A clean page costs nothing to evict; a dirty page costs a disk write.

kswapd, the kernel's background memory reclaimer, scans the LRU lists and evicts pages to keep utilisation within configured watermarks. Pages on the inactive list survive only if accessed between scans; repeated accesses earn promotion to the protected active list.

Under memory pressure kswapd cycles faster, shrinking the promotion window. When allocations outpace reclamation, free memory falls below the min watermark and the kernel stalls the allocating thread. This is direct reclaim: the thread must free pages from memory itself before its allocation can proceed, blocking the triggering operation.

For the compaction thread, a tolerable delay. For a critical read query that triggers a cache miss and must load pages from disk, it is not.

Inflated tail latencies are inevitable. The kernel and Cassandra each have mitigations. Neither is enough.

Existing Mitigations

The kernel's active/inactive page cache split provides some hot page protection. Read-once pages are contained in the inactive list. Premature eviction of hot page candidates remains the problem.

Cassandra uses FADV_DONTNEED to hint to the kernel that compaction pages can be dropped, but only once an SSTable is fully processed. The pollution occurs during processing; the hint arrives too late.

FADV_DONTNEED was adopted in 2010 in this Jira after both fadvise and Direct I/O were evaluated.
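The hint-after-processing pattern described above can be sketched roughly as follows. This is an illustration only, not Cassandra's code: the function name `read_then_drop` and the 1 MiB chunk size are assumptions made for the example, and `os.posix_fadvise` is available on Unix-like systems only.

```python
import os

CHUNK = 1 << 20  # 1 MiB read size, an arbitrary choice for this sketch


def read_then_drop(path: str) -> int:
    """Read a file sequentially, then hint that its cached pages can be dropped."""
    total = 0
    fd = os.open(path, os.O_RDONLY)
    try:
        while True:
            data = os.read(fd, CHUNK)  # buffered read: pages enter the page cache
            if not data:
                break
            total += len(data)  # stand-in for the merge work done on the data
        # The hint is issued only after the whole file has been consumed.
        # A length of 0 means "from offset to the end of the file".
        os.posix_fadvise(fd, 0, 0, os.POSIX_FADV_DONTNEED)
    finally:
        os.close(fd)
    return total
```

Every chunk read in the loop lands in the page cache before the hint is issued, which is the window in which hot pages can be displaced.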
Direct I/O showed no improvement in average read latency, the metric of focus at the time, but the wrong one.

Introducing Direct I/O

Direct I/O allows the application to read and write directly between disk and a userspace buffer, bypassing the page cache entirely. It requires both disk operations and off-heap memory buffers to be aligned to the filesystem block size.

Control of disk operations is transferred from the kernel to the application, eliminating writeback storms and protecting the page cache from pollution by readahead and read-once workloads.

Compaction is a prime candidate for Direct I/O on both the read and write path, with the read path addressed in this post. Input SSTables are read-once by definition; once compaction completes, that data will never be accessed again. The output SSTable, while not throwaway, is unlikely to see much read traffic. Freshly written SSTables are typically superseded by further compaction before they see meaningful access. Neither benefits from page cache residency.

The loss of kernel readahead is mitigated by Cassandra's own chunk readahead buffer, introduced in Cassandra 5 by Jon Haddad and Jordan West. Jon Haddad, a long-time Cassandra contributor and consultant who writes on Cassandra internals at his blog, also filed the Jira to bring Direct I/O support to the compaction read path.

I picked up the work, landing in this PR targeting Cassandra 6.

Benchmarking

Environment: Ubuntu 22.04, Linux 6.8.0-106-generic, 6 GB cgroup, 3 GB heap (~3 GB page cache). RAID1 NVMe, readahead 4 KB. Classic active/inactive LRU (MGLRU disabled).

Data: Cassandra 6.0-alpha2-SNAPSHOT, 2×65 GB SSTables (chunk_length_kb=4). Major compaction with cursor compaction enabled (default), unthrottled.

Workload: 10K reads/s across a variable number of hot partitions (100K–10M, ~100 MB–10 GB). Page cache dropped and Cassandra restarted before each run.

Headline numbers

Starting with the 100 MB hot set, comfortably within the 3 GB page cache:

[Table truncated in this excerpt; surviving column headers: Metric, Direct I/O]

※ For copyright reasons, only the first 3 paragraphs are quoted. Please read the original article for the full content.