规模化文本梯度:突破强化学习的奖励瓶颈

传统的强化学习(RL)算法依赖单一的奖励信号来优化策略,这会导致信息丢失,效率低下。

本文指出,这种方法限制了从专家反馈中学习的能力,因为专家可以提供更详细的失败模式和改进建议。

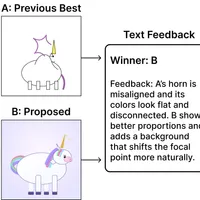

研究人员正在探索一种新的学习范式,即“文本梯度”方法,该方法利用文本形式的丰富反馈信息来引导模型改进,避免奖励信号的“瓶颈”效应。

这种方法包括基于批评的方法(直接针对错误进行指导)和进化方法(通过语言模型生成和选择改进的变体)。

通过利用文本反馈,可以实现更高效的目标优化,例如药物发现等领域,避免传统强化学习中的随机探索,取得更好的结果。

查看原文开头(英文 · 仅前 3 段)

RL Throws Away Almost Everything Evaluators Have to Say

When you get feedback on your work, it usually tells you what went wrong and how to fix it. But existing reinforcement learning (RL) algorithms throw most of that information away; it compresses potentially rich feedback into a single number, a reward1, then tries to learn by correlating rewards with actions across hundreds or thousands of attempts. We do this because our algorithms were designed for scalar supervision, not because of a fundamental constraint in learning from experience2.

To illustrate this, let’s consider a simple example. Suppose you’re judging cakes. You take a bite, you like the shavings on top, the ganache is perfectly tempered, but you want way more cherries throughout. Yet you only record: “4/5.” The baker learns nothing about the cherry distribution or what else worked well, only that this cake scored higher than a 3. If this is the only information you provide, the baker will likely have to do a lot more baking to figure out what you actually want. 3

※ 出于版权考虑,仅引用前 3 段。完整内容请阅读原文。